Wasteland Wrap-up #74

An experiment with ChatGPT, blowing up the world, a last chance, and questions about nuclear weapons and democracy...

These last two weeks have been good ones over here. I have had a few items of positive personal news that I am not quite yet able to make public, but that I will share as soon as possible.

The week before last, at the International Journalism Festival, I talked briefly with a journalist who left a major network/publication to become an independent journalist with an apparently huge Substack and YouTube channel. I asked them how it had worked out the way it did. They gave a few answers (of varying levels of persuasion, for me; “luck” was not among them) that mostly came down to “hard work” (i.e., they do updates of everything all the time, daily, etc.). It sounded fairly hellish, I will admit; it is hard enough for me to get done the things I want to get done and have a weekly blog entry, and I cannot imagine trying to do it on a daily basis.

One of the other things they said was that they ran all of their writing through ChatGPT or some other LLM/AI service to make it punchier, identify typos, and otherwise improve the chances it would do something in the world. I am pretty opposed to using LLMs for written work for a lot of reasons, pride in the craft being one of them, but I admit the thought stuck in my craw. Because I do hate the typos that pop up in my writing online, a function of a fast schedule (weekly content that is mixed into a lot of other work) and the individual nature of it (there’s no staff over here!). And I do love when I can write for a venue that has a good copyeditor, because they always have a better sense than I do of how to cut and condense this stuff (I just find it all too interesting).

What if, I wondered, I experimentally uploaded my blog post for this week to ChatGPT and asked it to work its wonders? Would it be impressive? Would it make something that would give me that feeling of a good editor? Would it at least find my typos? Am I being too much of a curmudgeon about this stuff?

So I took this week’s blog post (linked below), put it into an MS Word file, and uploaded it to the free version of ChatGPT. I told it to tighten up the writing and identify typos, but to not change its tone of voice to the insipid one that ChatGPT usually uses. “OK!” it said, insipidly, and then after a second of thinking gave me a new MS Word file with its edits.

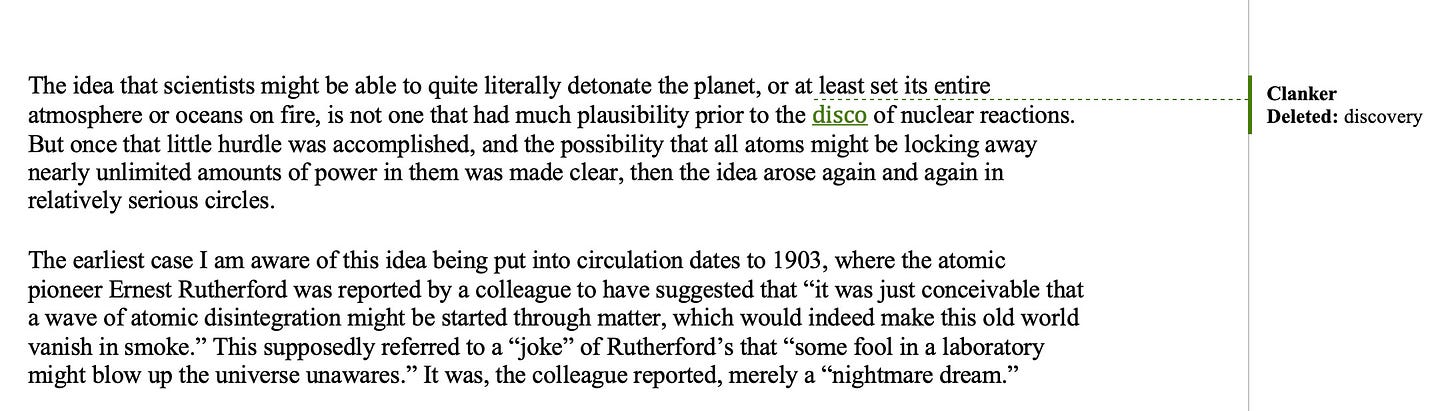

I told MS Word to create a composite version of my original file and the new file with the changes highlighted, so I could see its magic. It asked me who the author of the changes should be listed as, and I suggested “Clanker.” It did identify a couple of clear typos (I somehow misspelled “annihilation” once and ignored the red underline, and I once mistyped Ellsberg’s name), but it also let a few through that I noticed on a re-read (e.g., “would bu” was left in, where it should have been “would be”).

But otherwise it changed almost nothing. A few words snipped here and there, but no actual editing of importance was done. Except for one persistent change that is so strange that I feel the need to provide a screenshot of it, because it seems so absurd:

That’s right, it changed the word “discovery” to “disco” multiple times in the document. “The disco of nuclear reactions.” “The disco of induced radioactivity.” “The disco of nuclear fission.”

I admit that I did laugh out loud, and then laughed at myself for ever thinking this was a good idea. Now, I am sure that it doesn’t always give results like this. And perhaps if I were paying for the more expensive version of ChatGPT it would do better. Or another service. But the whole thing reminded me why I am so loath to rely on this kind of thing in the first place. Aside from the absurdity of wasting gobs of energy to query a statistical database full of copyright-infringed texts (including, I am positive, my own!) in a business model that seems guaranteed to destroy the economy no matter whether the predictions of its advocates come true or not, for an industry run by anti-human tech-bros who would set all of human culture on fire for a dollar and their science-fiction dreams… aside from all that, it also is just so often not that good.

Imagine giving a human being the task of editing this and, aside from the lightness of the edit, they changed all instances of “discovery” to “disco.” You’d wonder if it was a joke. You certainly wouldn’t be encouraged to pay them anything. Or have them teach your children. Anyway, I don’t think I am going to be using LLMs in this capacity anytime soon, so I just hope that you will see my typos for what they are: a consequence of an all-too-human workflow!

Well, I am an AI grump, I know this. And of course I am participating in a selection bias here. I have found a few uses of LLMs in my own life that, all of the ethical stuff aside, I find good enough. They all involve output whose veracity is very easy for me to check, like translating a statistical function to JavaScript for me, which I can then look over the logic of and test that it gives the correct outputs (even this often requires some back-and-forth, as the LLMs make assumptions about what I want and diverge from instructions). Or translating English into French for the purposes of a formal e-mail (even then, I look it over closely).

None of these uses involve anything I enjoy doing, like writing or researching or even the more enjoyable parts of programming. And I would be happy to give them up in a heartbeat, really. I simply do not understand why people would want to use LLMs for things like writing or researching, but I suppose people come in all sorts of different packages…

The post for Doomsday Machines that I subjected to that experiment is the one I posted on Friday, about the topic of “atmospheric ignition,” that is, setting the Earth’s atmosphere (or oceans, or crust) on fire through an endless, out-of-control nuclear reaction:

You might think, if you are of a certain frame of mind, that you have read everything there is to read about that topic — e.g., that during World War II, the Los Alamos scientists contemplated whether they could set the atmosphere on fire, and did the math and found out they could not.

This is only part of the story, however. The trope goes back to at least 1903, as far as I can tell, and the actual “math” behind the ignition problem is more interesting than I think has been appreciated anyway. More importantly, as Dan Ellsberg emphasized in his 2017 book The Doomsday Machine, there is at the core a question that doesn’t go away once you conclude that you can’t burn up the world that way: a question of how much risk is acceptable for scientists to take.

I am not sure exactly what I will write about next week, but I stumbled across a very revealing document from the mid-1950s that shows how the generals thought about nuclear war at that time, so I may write something that centers around it… we shall see…!
