The idea that scientists might be able to quite literally detonate the planet, or at least set its entire atmosphere or oceans on fire, had little plausibility prior to the discovery of nuclear reactions. But once that hurdle was cleared, and it became clear that all atoms might be locking away nearly unlimited amounts of energy, the idea arose again and again in relatively serious circles.

“Only a nightmare dream”

The earliest case I am aware of dates to 1903, when the atomic pioneer Ernest Rutherford was reported by a colleague to have suggested that “it was just conceivable that a wave of atomic disintegration might be started through matter, which would indeed make this old world vanish in smoke.” This supposedly referred to a “joke” of Rutherford’s that “some fool in a laboratory might blow up the universe unawares.” It was, the colleague reported, merely a “nightmare dream.”1

Rutherford’s collaborator, the chemist Frederick Soddy, also invoked the “nightmare” of death-by-transmutation in his public lectures. In a 1903 lecture on “Some Recent Advances in Radioactivity,” Soddy considered that:2

The knowledge [of radioactivity must] make us regard the planet on which we live as a storehouse stuffed with explosives, inconceivably more powerful than any we know of, and possibly only awaiting a suitable detonator to cause the earth to revert to chaos.

The next year, Soddy gave a lecture on radium to the Corps of Royal Engineers, in which he suggested that:3

It is probable that all heavy matter possesses—latent and bound up with the structure of the atom—a similar quantity of energy to that possessed by radium. If it could be tapped and controlled what an agent it would be in shaping the world’s destiny! The man who put his hand on the lever by which a parsimonious nature regulates so jealously the output of this store of energy would possess a weapon by which he could destroy the earth if he chose. […] The fact that we exist is a proof that [massive energetic release] did not occur; that it has not occurred is the best possible assurance that it never will. We may trust Nature to guard her secret.

Soddy did not mention the possibility of either a weapon or global destruction in his 1909 book, The Interpretation of Radium, which is an interesting omission. The idea was resurrected and further popularized some years later, in May 1922, by the British chemist Francis William Aston, who was awarded the Nobel Prize in Chemistry that year for his work on isotopes. Aston gave a lecture at Philadelphia’s Franklin Institute in which he warned that:

Should the research worker of the future discover some means of releasing this [atomic] energy in a form which could be employed, the human race will have at its command powers beyond the dreams of scientific fiction; but the remote possibility must always be considered that the energy once liberated will be completely uncontrollable and by its intense violence detonate all neighbouring substances. In this event the whole of the hydrogen on the earth might be transformed at once and the success of the experiment published at large to the universe as a new star.

Aston repeated this paragraph in his Nobel Prize lecture later that year. His lectures led to a spate of newspaper articles, as did the warnings that same year of an anonymous “Oxford scientist” about both atomic bombs and possible global annihilation: “it is conceivable, too, that such terrific force might eventually be liberated as to blow up the world.”

Newspapers around the world ran headlines about these warnings: “Tinkering With Angry Atoms May Blow Up the Earth,” “Earth as a Bomb,” “Will the Atom Blow Up the World?” But dismissing this idea in the 1900s-1920s was, as Soddy illustrated, simple enough: if it were that easy to turn the world into a runaway nuclear reaction, then surely it would have happened at some point over the course of the Earth’s long history. What are the odds that humanity, that johnny-come-lately species, would be the one to set it all aflame?

And it was all still quite hypothetical, as humanity showed no sign of having this level of control over transmutation: there was no “detonator.”

“Atmospheric ignition”

This would change in the 1930s. The discovery of induced radioactivity in 1934 made chain reactions conceivable: one nuclear reaction might lead to many more nuclear reactions. The discovery of nuclear fission in uranium in late 1938, and the fact that neutron-induced fission reactions produced additional neutrons, gave a practical way to accomplish nuclear transmutation at will. That the world had not already exploded from fission reactions at some point in its long past suggests that these reactions are not easy to start or propagate. And, indeed, the reason was quickly discovered: only the rare isotope uranium-235 is easily fissioned, while the far more abundant uranium-238 is not, and so it takes quite a lot of artifice to create the conditions for such chain reactions to occur.4

But the possibility of explosive nuclear reactions was, eventually, pursued for military purposes. And so we jump ahead to the famous meeting at the University of California, Berkeley, in July 1942, where J. Robert Oppenheimer assembled a coterie of nuclear physicists to discuss the theoretical basis for designing an atomic bomb, prior to the formation of the Los Alamos laboratory. And into this mix, the Hungarian physicist Edward Teller suggested a new nightmare: “atmospheric ignition.”

Accounts of the discussion vary. Daniel Ellsberg’s The Doomsday Machine (2017) contains an excellent summary of many of them.5 The gist is that Teller laid out the possibility that a bomb powered by an explosive uranium-235 fission chain reaction would produce, among other things, an intense and concentrated source of heat. This heat would be enough, he argued, to cause fusion reactions in light elements, like hydrogen. Teller’s primary interest here was the idea of the Super, or hydrogen bomb.

But this was linked with another idea: that such fusion reactions might also take place in the light elements of the Earth’s atmosphere, notably hydrogen (in its water vapor) and nitrogen (which makes up 78% of our air). If the atomic bomb started such a fusion reaction in the atmosphere, and that reaction was hot enough to cause additional fusion reactions in the surrounding air, then a runaway fusion reaction would, within seconds, traverse the globe, burning everything and leaving dead rock in its wake.

This kind of problem, to a nuclear physicist, is equal parts interesting and distressing. The assembled group, which besides Oppenheimer included the meticulous German physicist Hans Bethe (who would later win the Nobel Prize in Physics for his work in the 1930s uncovering the fusion reactions that power stars), picked over Teller’s equations and reasoning. A fatal flaw was soon found: the heat of any fusion reactions created would dissipate quickly in the atmosphere. That cooling would likely prevent a runaway fusion reaction from spreading, making it impossible. Thus Bethe, nearly instinctively, judged the scheme “impossible.”

“The ultimate catastrophe”

However, this was considered far from proven. Oppenheimer himself subsequently traveled to Chicago to talk with Arthur Compton, the Nobel laureate physicist running the Metallurgical Laboratory, which was working to create the world’s first nuclear reactors, to share news of the possibility. Compton wrote of this some 14 years later in his memoir:6

I’ll never forget that morning. I drove Oppenheimer from the railroad station down to the beach looking out over the peaceful lake. There I listened to his story. What his team had found was the possibility of nuclear fusion—the principle of the hydrogen bomb. This held what was at the time a tremendous unknown danger. Hydrogen nuclei, protons, are unstable, for they could combine into helium nuclei with a very high temperature. But might not the enormously high temperature of an atomic bomb be just what was needed to explode hydrogen? And if hydrogen, what about the hydrogen of sea water? Might the explosion of an atomic bomb set off an explosion of the ocean itself?

Nor was this all. The nitrogen in the air is also unstable, though in less degree. Might it not be set off by an atomic explosion in the atmosphere?

These questions could not be passed over lightly. Was there really any chance that an atomic bomb would trigger the explosion of the nitrogen in the atmosphere or of the hydrogen in the ocean? This would be the ultimate catastrophe. Better to accept the slavery of the Nazis than to run a chance of drawing the final curtain on mankind!

This is, as Ellsberg later noted, quite a proposition: if the atomic bomb had any chance of killing the entire planet, then it was better to accept the possibility of a Nazi victory than to risk total suicide. We will come back to this proposition later.

In many later recollections, particularly in the 1970s when the issue was raised again, Manhattan Project participants like Bethe outright dismissed the idea that they had ever considered atmospheric ignition even a remote possibility. One finds this sentiment in many modern-day discussions of it. Ellsberg argued, and I think he is correct (for his reasons, and for others that I will come back to later), that this latter-day denial is bad history.

It is perhaps quite true that Bethe himself never took it seriously, for reasons that we’ll get to below. But it is also clear that some did take it more seriously, and that even up to the moment of the Trinity test there were those who feared, in some small way, that the “ultimate catastrophe” might still be in the cards. The insistence that this was not a legitimate concern was in part an effort to retrospectively paper over any uncertainty about a risk that had been taken.

To acknowledge any uncertainty on the issue is to suggest that these scientists were willing to take a secret gamble with humanity, beyond the one they took by merely ushering these weapons into the world. And acknowledging that would undermine the story the atomic scientists told after World War II: that they were the responsible experts whose views should be taken seriously when shaping the future of the world.

In 1959, Compton told the writer Pearl S. Buck that:

scientists discussed the dangers of fusion but without agreement. Again Compton took the lead in the final decision. If, after calculation, he said, it were proved that the chances were more than approximately three in a million that the earth would be vaporized by the atomic explosion, he would not proceed with the project. Calculation proved the figures slightly less—and the project continued.

This suggests the issue was a more drawn-out discussion than the “never took it seriously” narrative would imply, and it also reveals a firm-but-arbitrary limit of three in a million as the “acceptable odds” for this catastrophe. Those are indeed long odds, but not as long as the odds of winning a lottery jackpot.

What was the source of these estimates? Teller did write up a paper in 1943 on the subject (cited in some literature as LA-001), but I have never seen it made available. The internal, top secret Manhattan Project History, commissioned by General Groves, with its Los Alamos section written by the physicist David Hawkins and finished in 1947, describes the work thusly:

It should be mentioned at this point that in the early period of the project the most careful attention was given to the possibility that a thermonuclear reaction might be initiated in light elements of the Earth’s atmosphere or crust. The easiest to initiate, if any, was found to be the reaction between nitrogen nuclei in the atmosphere. It was assumed that only the most energetic of several possible reactions would occur, and that the reaction cross-sections [probabilities] were at the maximum values theoretically possible. Calculation led to the result that no matter how high the temperature, energy loss would exceed energy production by a reasonable factor. At the assumed temperature of three million electron volts the reaction failed to be self-propagating by a factor of sixty. This temperature exceeded the calculated initial temperature of the deuterium reaction by a factor of one hundred, and that of the fission bomb by a larger factor.

The impossibility of igniting the atmosphere was thus assured by science and common sense. The essential factors in these calculations, the Coulomb forces of the nucleus, are among the best understood phenomena of modern physics. The philosophic possibility of destroying the earth, associated with the theoretical convertibility of mass into energy, remains. The thermonuclear reaction, which is the only method now known by which such a catastrophe could occur, is evidently ruled out. The general stability of matter in the observable universe argues against it. Further knowledge of the nature of the great stellar explosions, novae and supernovae, will throw light on these questions. In the almost complete absence of real knowledge, it is generally believed that the tremendous energy of these explosions is of gravitational rather than nuclear origin.

It is not clear whether this perfectly reflects the reasoning of 1943-1945. Ellsberg interviewed Hawkins about the question in 1982, and says that Hawkins told him that he had “done more interviews with the participants on this particular subject, both before and after the Trinity test, than on any other subject,” and that the atmospheric ignition question was continually “rediscovered” by other, younger researchers and had to be dismissed anew each time. This suggests another origin of the early defensiveness about this topic, and perhaps part of the reason for its seemingly conclusive treatment in the Manhattan District History, which was explicitly meant to serve as a classified record of activities to be consulted if justifications were ever needed for actions taken during the war. Hawkins would tell Ellsberg that by “impossibility,” he had really meant impossible “for practical purposes,” as opposed to literally impossible.

“A colossal catastrophe”

In March 1946, the question was raised from another source. In the months prior to Operation Crossroads, there was considerable public controversy regarding the safety of two underwater tests that were planned. One was a shallow underwater test (Baker), and the other was a deep underwater test (Charlie, which was ultimately cancelled for other reasons). These underwater shots appear to have generated similar fears about fusion ignition, this time of the oceans.

The Harvard physicist Percy Bridgman, whose high-pressure lab had generated data on plutonium’s equation of state during the Manhattan Project, and who would receive the Nobel Prize in Physics for 1946, was so alarmed by the idea that he wrote to Bethe on the subject, and his fears were forwarded to General Leslie Groves:

What is worrying to me is the possibility that if the bomb is exploded in the ocean the hydrogen may be converted to helium with an astronomical release of energy. […] If the history of physics teaches any one thing, it is that long-range extrapolations are hazardous. Even the best human intellect has not imagination enough to envisage what might happen when we push far into new territory. […] To an outsider the tactics of the argument which would justify running even the slightest risk of such a colossal catastrophe appears exceedingly weak. […]

If I am right in thinking that the tactics of the argument are weak, then it would be wrong to drop the bomb whether or not the ocean explodes. Suppose the bomb is dropped as [is] at present planned, the ocean does not explode, and later it should become known to the general public that the argument had been weak and that the scientists had permitted the taking of a stupendous chance without doing everything in their power to safeguard all possibilities. There might be a reaction against science in general which would result in suppression of all scientific freedom and the destruction of science itself.

This appears to me as cause for greater concern than the blowing up of the ocean, which after all would not very much affect a world of dead men.

That it was forwarded to Groves points to some concern about it. It is possible that someone like Groves was worried less about the reality of Bridgman’s fears than about the way that having such a thing in writing could, inadvertently, make Bridgman’s fears about later accountability come true. How “weak” was the argument, and how would they later be judged if someone, including Bridgman himself, made this point publicly?

The immediate outcome of Bridgman’s letter appears to have been a “debunking” article by Hans Bethe, which appeared in the March 15, 1946 issue of The Bulletin of the Atomic Scientists. By this point, Bethe notes, there had been “much public discussion” about the possibility of atmospheric or oceanic ignition. Bethe says that the concern is “very well taken,” but he thoroughly discourages the idea. His basic argument is that an atomic bomb contains a lot of non-reacting material (everything beyond the part that is fissioning), which necessarily lowers the temperatures transmitted to the atmosphere, and that the temperatures needed for fusion are very high indeed. He concludes that while there were unknowns, safety seems guaranteed by wide margins.7

It is an interesting argument, and different from any of the others given here. He also, per his expertise, discourses at length on the conditions inside stars, and on how different they are from those on Earth, even in places near exploding atomic bombs. It is perhaps the best evidence we have for how Bethe himself thought about the question, which is interesting because it is not how, say, Teller seems to have considered it. Bethe’s answer from 1946 is far more categorical than any of the others offered up so far.

“The dangerous temperature”

The concerns about Crossroads might provide some context for the formal writing up of a paper tackling the atmospheric ignition problem by Teller, Emil Konopinski, and Cloyd Marvin, Jr., titled “Ignition of the Atmosphere with Nuclear Bombs” (LA-602), dated August 1946.8 It might be, for example, the “due diligence” desired to show that the “argument” about ignition was, in fact, not weak. But it concerns itself only with atmospheric ignition (not oceanic ignition), so perhaps not. Otherwise it is not entirely clear why this was written up more than a year after the Trinity test, particularly if there was an earlier paper from 1943 on the same subject.

The Konopinski–Marvin–Teller paper is extremely technical but not too difficult to follow if one is familiar with the basic terms. The question they try to tackle is, what would the easiest possible atmospheric ignition reaction be, and are there conditions under which it could ever occur, based on what was known at the time?

They assume that a nitrogen–nitrogen fusion reaction would be the “easiest” fusion reaction to induce in the Earth’s atmosphere. They did not know how easy it would be to induce, because they did not know the probability of that fusion reaction under different conditions of temperature or pressure (its cross-section, in technical terms). Given these uncertainties, they advocated a risk-averse philosophy: “because of the uncertainties in the knowledge of these processes, the policy should be adopted of exaggerating the dangers at any point which appears at all questionable.”

So they made some conservative guesses as to what seemed reasonable based on what they did know, and then reasoned about how this reaction might propagate in the atmosphere if it started, balancing the processes that would produce heat against those that would dissipate it.9

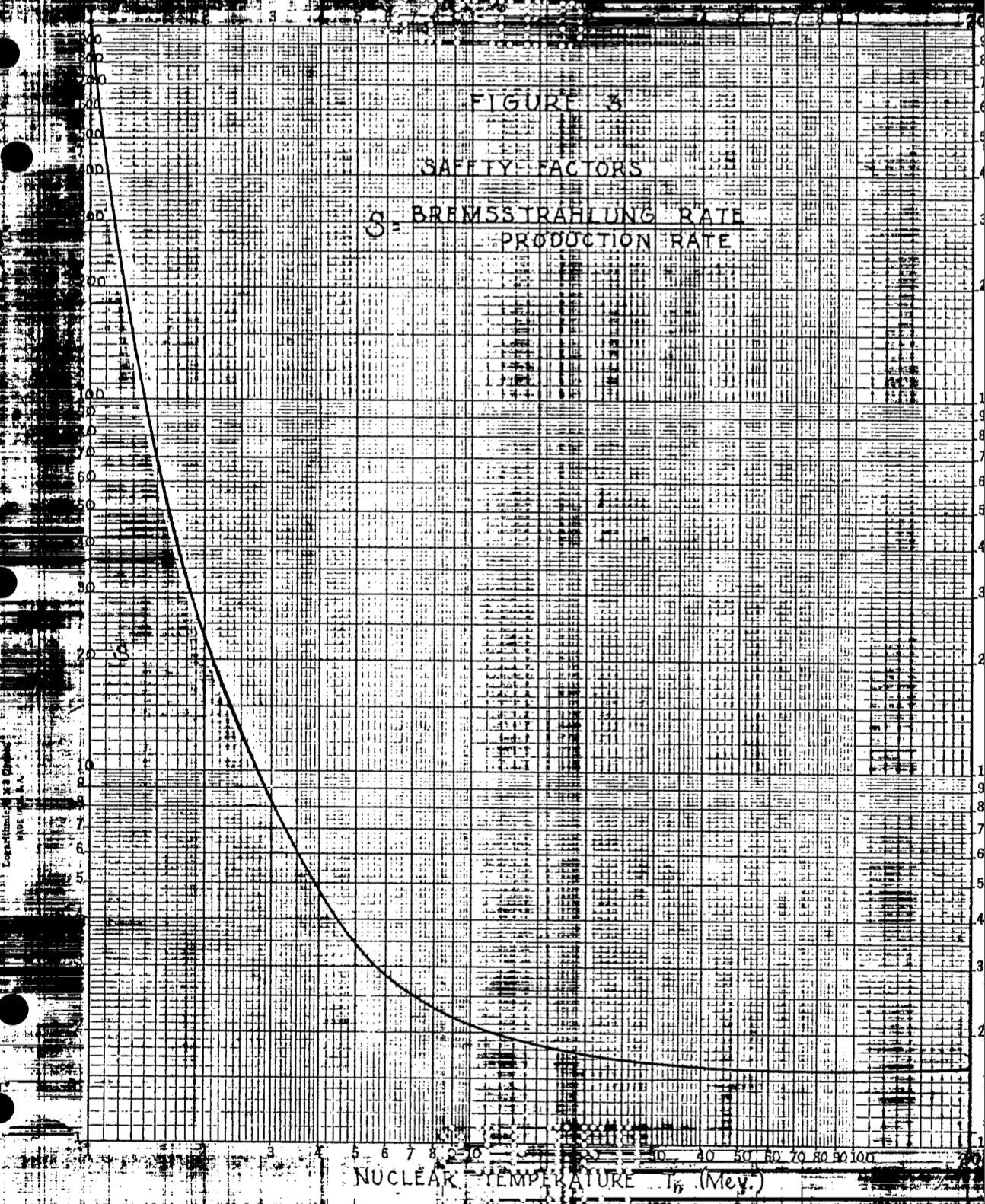

All of their results showed that no reaction would propagate. A major question for the paper, though, was how confident they were in that answer. This came in the form of a “safety factor,” the ratio of energy losses to gains. If that ratio is greater than 1, the reaction is a net loss: no propagation, no global thermonuclear apocalypse. If it ever gets close to 1, however, that is a problem. They ran their calculations with different assumptions about how much energy was being generated by the bomb, and found that for all reasonable numbers, the “safety factor” was very safe indeed.

But they found that if the initial temperature were high enough, the “safety factor” would drop to only around 1.6. That was still “safe,” but close enough to 1 to indicate that any major errors in their understanding could be quite dangerous indeed. This corresponded to an initial temperature of 10 MeV, which was very hot indeed. They explained that reaching it would require a weapon on the order of 27,000 megatons whose total energy was entirely pumped into the air as heat. In reality, they explained, most of that energy would not be converted into heat, so even this scenario, a weapon over a million times more powerful than the weapons of World War II, was not very plausible.10
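To see why a ratio of 1.6 worried the authors while the Manhattan District History’s “factor of sixty” did not, it helps to ask how wrong an estimate would have to be to erase the margin. The Python sketch below is illustrative only. It assumes the safety factor is simply losses divided by gains, and that an error in the reaction rates scales the gains by a single multiple. The `gain_error` parameter and the twofold error are hypothetical, not taken from LA-602.

```python
def safety_factor(energy_lost: float, energy_gained: float) -> float:
    """Ratio of energy losses to energy gains; above 1 means no propagation."""
    if energy_gained <= 0:
        raise ValueError("energy_gained must be positive")
    return energy_lost / energy_gained


def with_gain_error(factor: float, gain_error: float) -> float:
    """Safety factor if the true gains are `gain_error` times the estimate.

    Losses are held fixed, so the ratio shrinks by that same multiple.
    """
    return factor / gain_error


def propagates(factor: float) -> bool:
    """A ratio at or below 1 means gains keep pace with losses."""
    return factor <= 1.0


# Hypothetical check: what if the reaction rates were underestimated by 2x?
for label, factor in [("factor of sixty (3 MeV)", 60.0), ("LA-602 at 10 MeV", 1.6)]:
    revised = with_gain_error(factor, gain_error=2.0)
    print(f"{label}: {factor} -> {revised:.1f}, propagates={propagates(revised)}")
```

With a hypothetical twofold underestimate of the gains, the factor of sixty falls to 30 and stays safe, while 1.6 falls to 0.8, below break-even. A small margin leaves little room for errors in poorly known cross-sections.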

They continued, though, and suggested that if somehow the energy of 3 tons of fissioning material were dumped into a very small volume of air (a sphere with a 7-meter radius), that might get closer to the “dangerous” temperature of 10 MeV. This is still not very plausible: the weapons of World War II each fissioned only about 1 kilogram of material, so we are still talking about something thousands of times more explosive, on the order of 50 megatons.11
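The yield comparisons in the last two paragraphs can be checked with back-of-the-envelope arithmetic. The sketch below makes two simplifying assumptions: the fissioning material is treated as uranium-235, and each fission releases about 200 MeV. It also uses the conventional 4.184 × 10^12 joules per kiloton of TNT. These are textbook approximations, not figures taken from LA-602.

```python
AVOGADRO = 6.02214076e23        # atoms per mole (exact SI value)
MEV_TO_J = 1.602176634e-13      # joules per MeV
KT_TNT_J = 4.184e12             # joules per kiloton of TNT (conventional definition)

# Assumptions for this sketch, not figures from the paper:
ENERGY_PER_FISSION_MEV = 200.0  # rough total energy released per fission
MOLAR_MASS_G = 235.0            # treat the fissioning material as uranium-235


def fission_yield_kt(mass_kg: float) -> float:
    """Approximate TNT-equivalent yield (kilotons) of fissioning `mass_kg` completely."""
    atoms = mass_kg * 1000.0 / MOLAR_MASS_G * AVOGADRO
    return atoms * ENERGY_PER_FISSION_MEV * MEV_TO_J / KT_TNT_J


one_kg = fission_yield_kt(1.0)          # roughly a World War II weapon
three_tons = fission_yield_kt(3000.0)   # the "3 tons" case discussed above
print(f"1 kg: {one_kg:.0f} kt; 3 t: {three_tons / 1000:.0f} Mt")
print(f"27,000 Mt is about {27_000_000 / one_kg:,.0f} times a 1 kg weapon")
```

This gives roughly 20 kilotons per kilogram fissioned, in line with the World War II weapons, and close to 60 megatons for 3 tons. That is on the order of the 50 megatons in the text. Part of the energy per fission escapes as neutrinos, and estimates of the recoverable share vary, which would shift these figures somewhat. A 27,000-megaton weapon comes out well over a million times larger than a 1-kilogram one.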

But this much lower number, they noted, does start to get one into the territory of Teller’s contemplated Super bomb. The Super was assumed to be capable of being thousands of times more powerful than the weapons used against Japan, and it would also release more of its energy in a form “useful” for heating the air than fission bombs do. Thus a fusion reaction in “a sphere of liquid deuterium only 1 to 1.5 meter in radius may suffice,” which is in the range of what the multi-megaton Super bombs contemplated during the war were imagined to be.12

Still, even in this situation, the authors have faith that the cooling effects would win out. The abstract of the paper is categorical: “The energy losses to radiation always overcompensate the gains due to the reactions.” But the actual conclusion of the paper couches its work in more uncertainty than this:

There remains the distant possibility that some other less simple mode of burning may maintain itself in the atmosphere.

Even if the reaction is stopped within a sphere of a few hundred meters radius, the resultant earth-shock and the radioactive contamination of the atmosphere might become catastrophic on a world-wide scale.

One may conclude that the arguments of this paper make it unreasonable to expect that the N+N reaction could propagate. An unlimited propagation is even less likely. However, the complexity of the argument and the absence of satisfactory experimental foundations makes further work on the subject highly desirable.

Now, again, this particular paper was finished after the Trinity test, so its conclusion that the atmosphere would not be set aflame by Trinity was presumably considered pretty valid. In his memoirs, Teller says that he, Konopinski, and Marvin, “demonstrated” with their equations that “such a cataclysm could not occur,” but that the famed physicist Enrico Fermi was not satisfied:13

But when we had completed our proof, Fermi insisted that we go one step further. All we had proved, he pointed out, was that such an explosion could not occur according to known laws of physics. But what presently undiscovered phenomena might exist that, under the novel conditions of extreme heat, might magnify the consequences and lead to an explosion? Considering that question occupied my time immediately before the test. I explained the possibilities I had considered and asked for suggestions from everyone who would listen to me.

Fermi’s question is a bold one, and is perhaps the reason for the expressions of doubt, and the desire for further research, at the end of the 1946 paper. After all, if all of this is new physics, who is to say that they had actually got it all figured out? They knew they didn’t even know the exact cross-section of the reaction they were talking about; what about the things they didn’t know they didn’t know?

In October 1945, Teller wrote up a short paper for Fermi about the possibility of the Super, as part of postwar planning. In it, he mentioned atmospheric ignition in the context of an even bigger weapon:14

Careful considerations and calculations have shown that there is not the remotest possibility of such an event [ignition of the atmosphere]. The concentration of energy encountered in the super bomb is not greater than that of the atomic bomb. In my opinion the risks were greater when the first atomic bomb was tested, because our conclusions were based at that time on longer extrapolations from known facts. The danger of the super bomb does not lie in physical nature but in human behavior.

This is an interesting data point in Teller’s own thinking on the matter, and on the question of the unknown unknowns.

“Disastrous consequences”

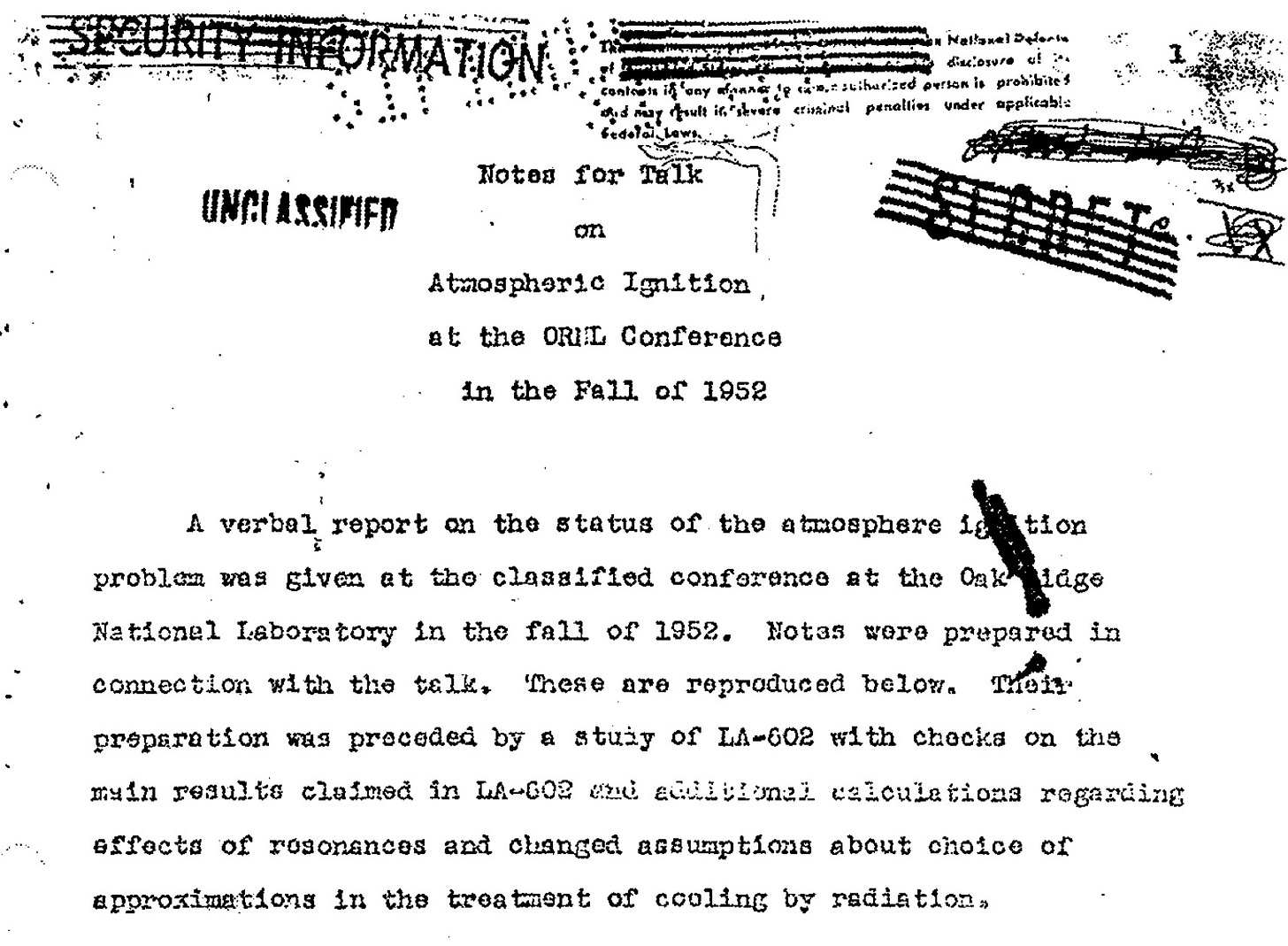

The matter was not, apparently, completely resolved for Teller. In 1951, as work on the hydrogen bomb was moving toward a planned test in late 1952, he asked the physicist Gregory Breit, then at Yale University, to independently reconsider all of the calculations with fresh eyes. Teller apparently told Breit that “the considerations of the wartime period might have been too hurried,” and that the plans to test a Super “have increased the importance of the question.”15

By this point, much more was known about fusion reactions, including the fact that they were much harder to start and propagate than Teller had imagined in the 1940s. Breit and his “group” of postdocs and graduate students tackled the problem and had answers by the fall of 1952, prior to the first megaton-range thermonuclear test, Ivy Mike.

The Breit group’s recalculation was able to substitute much more definite data than had been available in 1943-1946. They concluded that, as before, there was a “dangerous temperature” at which energy gains might overcome energy losses, and they even revised it downward: they agreed with 10 MeV as a dangerous temperature, but thought it could possibly go as low as 5 MeV. But they noted that the temperatures produced by the bomb being contemplated (on the order of 10 megatons) would be 500 to 1,000 times too low (only 10-15 keV). “In the fall tests,” they concluded, “there is no danger that one can see.” They considered as well that “although one does not know all the factors with certainty it is quite unreasonable to expect them to be off to a degree which would matter.”
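Temperatures in these papers are quoted as energies (kT), which can obscure the size of the gap. The sketch below converts keV to kelvin with the Boltzmann constant. It also computes the ratio of each dangerous temperature to each temperature quoted for the planned test. Pairing these four values is an illustration of mine, not something from the Breit report.

```python
BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV per kelvin


def kev_to_kelvin(kev: float) -> float:
    """Convert a temperature quoted as an energy kT, in keV, to kelvin."""
    return kev * 1000.0 / BOLTZMANN_EV_PER_K


dangerous_kev = [5_000.0, 10_000.0]  # 5 MeV and 10 MeV, per the Breit group
bomb_kev = [10.0, 15.0]              # the 10-15 keV quoted for the planned test

for d in dangerous_kev:
    for b in bomb_kev:
        print(f"{d / 1000:.0f} MeV vs {b:.0f} keV: {d / b:,.0f}x too low")

print(f"10 keV ~ {kev_to_kelvin(10.0):.2e} K; 10 MeV ~ {kev_to_kelvin(10_000.0):.2e} K")
```

The quoted range of 500 to 1,000 corresponds to comparing 5 and 10 MeV against 10 keV. Against 15 keV the ratios are somewhat smaller, roughly 330 to 670. For scale, 10 keV is about 116 million kelvin, and 10 MeV is a thousand times hotter.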

Even here, though, they felt the need to add a bit of critique. The original paper, and perhaps their own, suffered from the possibility of unknown unknowns:

The theory of nuclear forces is still in a rudimentary stage and while it is generally agreed that very large effects of this kind are improbable it would be unfortunate if one were mistaken and if one were the cause of disastrous consequences through overconfidence in present day nuclear theory. Much of the latter [the report] is based on incomplete evidence. The attitude regarding what constitutes certainty in question under discussion is necessarily different from that taken in deciding what constitutes a good Ph.D. dissertation problem or even what constitutes a suitable subject for inclusion in an ordinary bomb test.

We are back, again, at Ellsberg’s question: how much risk is allowable if you are talking about the possibility of global annihilation? Should “normal” scientific attitudes towards risk and uncertainty prevail, or are they inadequate when the risk of being wrong is so large? Can one know, in advance, how wrong the unknown unknowns could be? It is interesting to consider that less than two years later, the second American thermonuclear test, Castle Bravo, would become a major radiological accident, in part because scientists were unable to predict the physics involved in the test. Even the Trinity test itself was several times more explosive than had been anticipated.

“The necessary conditions”

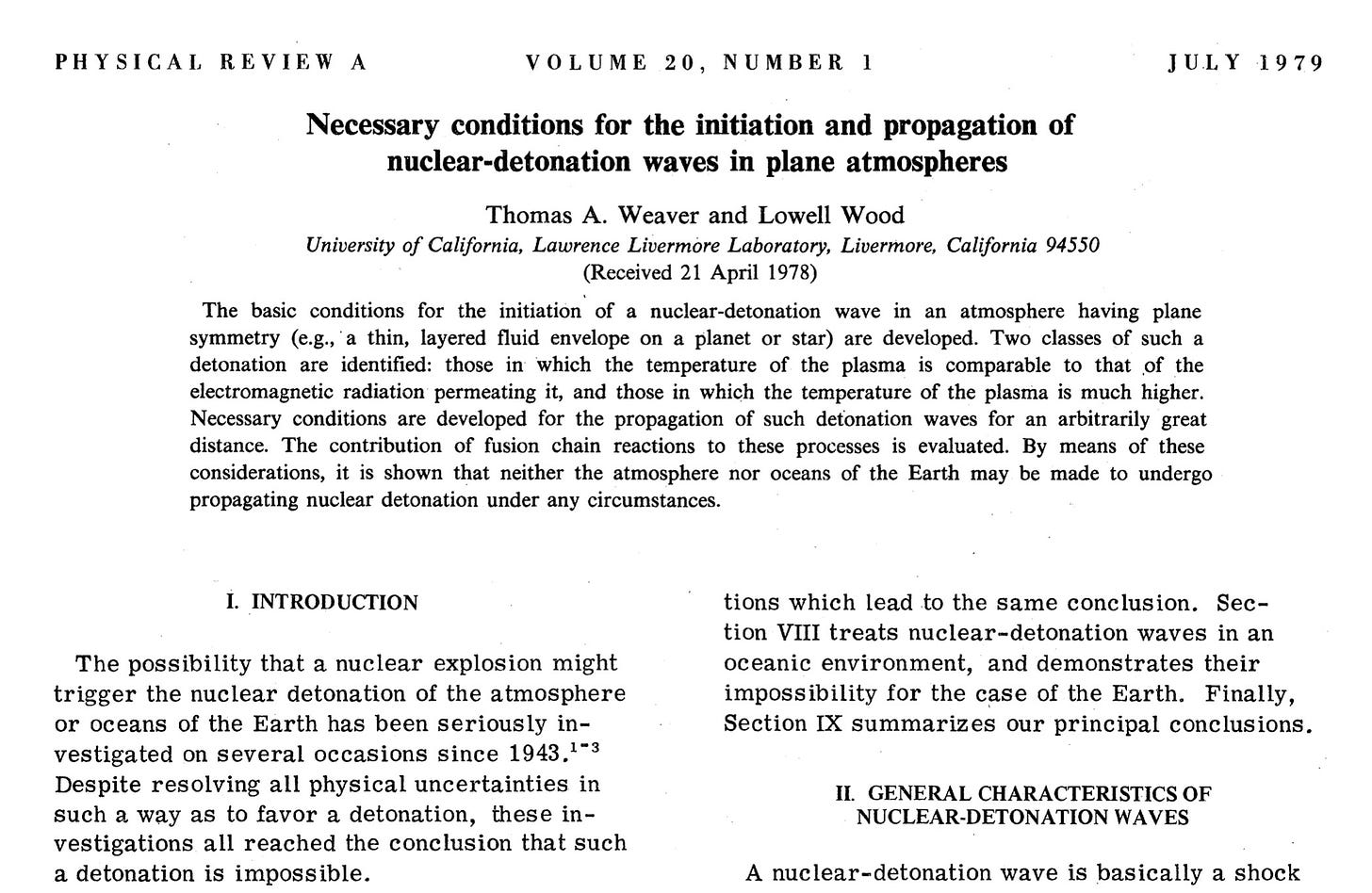

In 1979, two Livermore scientists, Thomas A. Weaver and Lowell Wood, published a paper that re-studied the atmospheric and oceanic ignition problems with the benefit of decades of data and advanced computing capabilities. Instead of asking whether kiloton- or megaton-range nuclear detonations could set the atmosphere of Earth on fire (they already knew, plainly, that they could not), they asked what the “necessary conditions” would be to set off a runaway nuclear chain reaction in an atmosphere.16

They concluded, as I have written about in the past, that the “easiest” way for such a thing to happen would involve increasing the deuterium content of the ocean twentyfold, at which point it could be ignited by a 200 teraton (200 million megaton) explosion. Which is a very precise way to say “impossible.”

By this point, in 1979, I think it is fair to say that no scientists took the possibility of atmospheric ignition seriously. If anything, they were perhaps all too quick to dismiss it, even retrospectively. By the 1970s, the idea of the paternal scientific expert giving wise counsel was coming under widespread attack. This was a result of the deep linkages that had formed between the scientific community and the weapons industry, and of the perceived role that scientists played in enabling and perpetuating the Vietnam War.17

But it is worth returning to Ellsberg’s question, which, as we have seen, is echoed in many of the earlier discussions by the historical actors themselves: What level of risk is tolerable in this situation, particularly when these risks are being taken by agents who are not subject even to the oversight of scientific peer review, much less democratic oversight?

Let’s say that we adopt Compton’s criterion that anything more than a 3 in a million chance is too much when we are talking about killing everyone on Earth. One might still find even that to be a dangerously risky proposition given the unknowns and the potential consequences; again, one-in-a-million events do occur, sometimes.

But let us imagine the circumstances of World War II or the early Cold War, when these bombs were being developed and tested not out of mere interest but as part of a major global confrontation. Under those circumstances, we could agree that odds of roughly this order of magnitude were ones we might accept: one in a million. If experts had imagined the odds of atmospheric ignition to be, say, one in a hundred, would the Trinity test have been vastly irresponsible? I think undoubtedly so.

But here’s where someone like Ellsberg wants us to flip things around. It’s not just about atmospheric ignition. Ask yourself: what do you think the odds are of a nuclear war occurring in the next century? My sense is that most people who study this question seriously would consider them much higher than one in a million. The historical record, with a few very “close calls” in the first eight decades of the nuclear age, suggests that they might be very high indeed.
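The arithmetic behind this flip can be made concrete. If an event has a small, independent chance p of happening each time a risk is run, the chance that it happens at least once in n tries is 1 - (1 - p)^n. The sketch below applies this formula in two ways. First, it applies Compton’s three-in-a-million threshold to hypothetical numbers of tests. Second, it applies a purely hypothetical 1% annual risk over a century. Neither the test counts nor the 1% figure is an estimate from the text, and real risks are unlikely to be independent or constant from one trial to the next.

```python
def prob_at_least_one(p_each: float, trials: int) -> float:
    """Chance that an event with independent per-trial probability p_each
    happens at least once in `trials` trials: 1 - (1 - p)^n."""
    if not 0.0 <= p_each <= 1.0:
        raise ValueError("p_each must be a probability")
    return 1.0 - (1.0 - p_each) ** trials


compton_limit = 3e-6  # Compton's "three in a million", as reported by Buck
for n in (1, 100, 1000):  # hypothetical numbers of tests, each run at that limit
    print(f"{n:>5} tests: {prob_at_least_one(compton_limit, n):.4%}")

# The same formula turns a hypothetical annual risk into a century-long one.
print(f"1% per year over 100 years: {prob_at_least_one(0.01, 100):.0%}")
```

Even at Compton’s threshold, a thousand tests would push the cumulative chance to about 0.3%. A 1% annual risk compounds to roughly 63% over a hundred years.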

The fears of atmospheric ignition, in retrospect, seem perhaps quaint, and open to attack by both mathematics and experience. But the underlying problem, the underlying question of how much risk is acceptable, seems to be as potent as ever.

William Cecil Dampier Whetham to Ernest Rutherford (26 July 1903), quoted in A.S. Eve, Rutherford (Macmillan, 1939), 102. The result of this correspondence shows up in Whetham’s popular science book, The Recent Development of Physical Science (Murray, 1904), on 243: “It is conceivable that some means may one day be found for inducing radio-active change in elements which are not normally subject to it. Professor Rutherford has playfully suggested to the writer the disquieting idea that, could a proper detonator be discovered, an explosive wave of atomic disintegration might be started through all matter which would transmute the whole mass of the globe, and leave but a wrack of helium behind. Such a speculation is, of course, only a nightmare dream of the scientific imagination, but it serves to show the illimitable avenues of thought opened up by the study of radioactivity.”

Quoted in Linda Merricks, The World Made New: Frederick Soddy, Science, Politics, and Environment (Oxford University Press, 1996), 33.

Quoted in Richard Rhodes, The Making of the Atomic Bomb (Simon & Schuster, 1986), 44.

An interesting aside, known to many who might be reading this article but perhaps not all, is that in the very distant past this was not necessarily the case. Much earlier in the Earth’s history, over a billion years ago, the fraction of the fissile isotope uranium-235 in natural uranium was much higher than the less than 1% that it is today. That is high enough that if enough uranium was in the same place, and in the presence of a neutron moderator (like regular water), a “natural” nuclear chain reaction could take place. Evidence of one of these “natural nuclear fission reactors” was discovered in Oklo, Gabon, in the 1970s; its chain reaction occurred 1.7 billion years ago, when U-235 made up 3.65% of natural uranium. The reconstructed reaction history at Oklo is quite interesting: water would seep into the uranium deposits, start the reaction, get heated by the reaction, and then boil away, stopping the reaction again. A few hours later, the minerals would cool to the point that water would seep back into them, and it would start all over again. This continued for several hundred thousand years. Even this was only possible because the oxygen content of the Earth’s atmosphere had risen enough for uranium to become soluble in water, which apparently requires oxygen.
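The higher U-235 fraction in the past follows from the two isotopes’ different half-lives: U-235 decays much faster than U-238, so running the clock backward raises its share. A minimal back-extrapolation, assuming commonly cited half-lives (about 704 million years for U-235 and 4.468 billion years for U-238), a present-day fraction of 0.72%, and ignoring U-234:

```python
import math

# Half-lives in billions of years (commonly cited values; an assumption here).
HALF_LIFE_U235 = 0.7038
HALF_LIFE_U238 = 4.468
# Present-day atom fraction of U-235 in natural uranium (~0.72%).
PRESENT_U235_FRACTION = 0.0072


def u235_fraction(gyr_ago: float) -> float:
    """U-235 atom fraction `gyr_ago` billion years ago, ignoring U-234."""
    lam235 = math.log(2) / HALF_LIFE_U235
    lam238 = math.log(2) / HALF_LIFE_U238
    # Undo the decay of each isotope separately, then renormalize.
    n235 = PRESENT_U235_FRACTION * math.exp(lam235 * gyr_ago)
    n238 = (1.0 - PRESENT_U235_FRACTION) * math.exp(lam238 * gyr_ago)
    return n235 / (n235 + n238)


for t in (0.0, 1.7, 2.0):
    print(f"{t} billion years ago: {100 * u235_fraction(t):.2f}% U-235")
```

In this simple model the fraction comes out to roughly 2.9% at 1.7 billion years ago and roughly 3.7% at 2 billion years ago, so the exact percentage one quotes depends heavily on the age assumed for the deposit.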

Daniel Ellsberg, The Doomsday Machine: Confessions of a Nuclear War Planner (Bloomsbury, 2017), chapter 17. The one place where I think Ellsberg misses the mark is in taking too seriously Fermi’s offer of taking bets on the possibility of atmospheric ignition at Trinity. Clearly that was a joke: it was a bet that could not be collected on in one of its two possible outcomes.

Arthur H. Compton, Atomic Quest: A Personal Narrative (Oxford University Press, 1956), 128.

Interestingly to me, Bethe also argued that, of all the tests at Operation Crossroads, the only one the scientists thought worth doing was the deep underwater one, since it was the only one expected to provide new data, and so the specific calls for cancelling that test were ultimately misguided. The deep underwater “Charlie” test was eventually cancelled as a consequence of the “Baker” (shallow underwater) test creating a much larger radioactive hazard than expected, and of waning interest and rising costs associated with the test series.

Emil Konopinski, Cloyd Marvin, Jr., and Edward Teller, “Ignition of the Atmosphere with Nuclear Bombs,” (14 August 1946), LA-602, Los Alamos National Laboratory, via Federation of American Scientists.

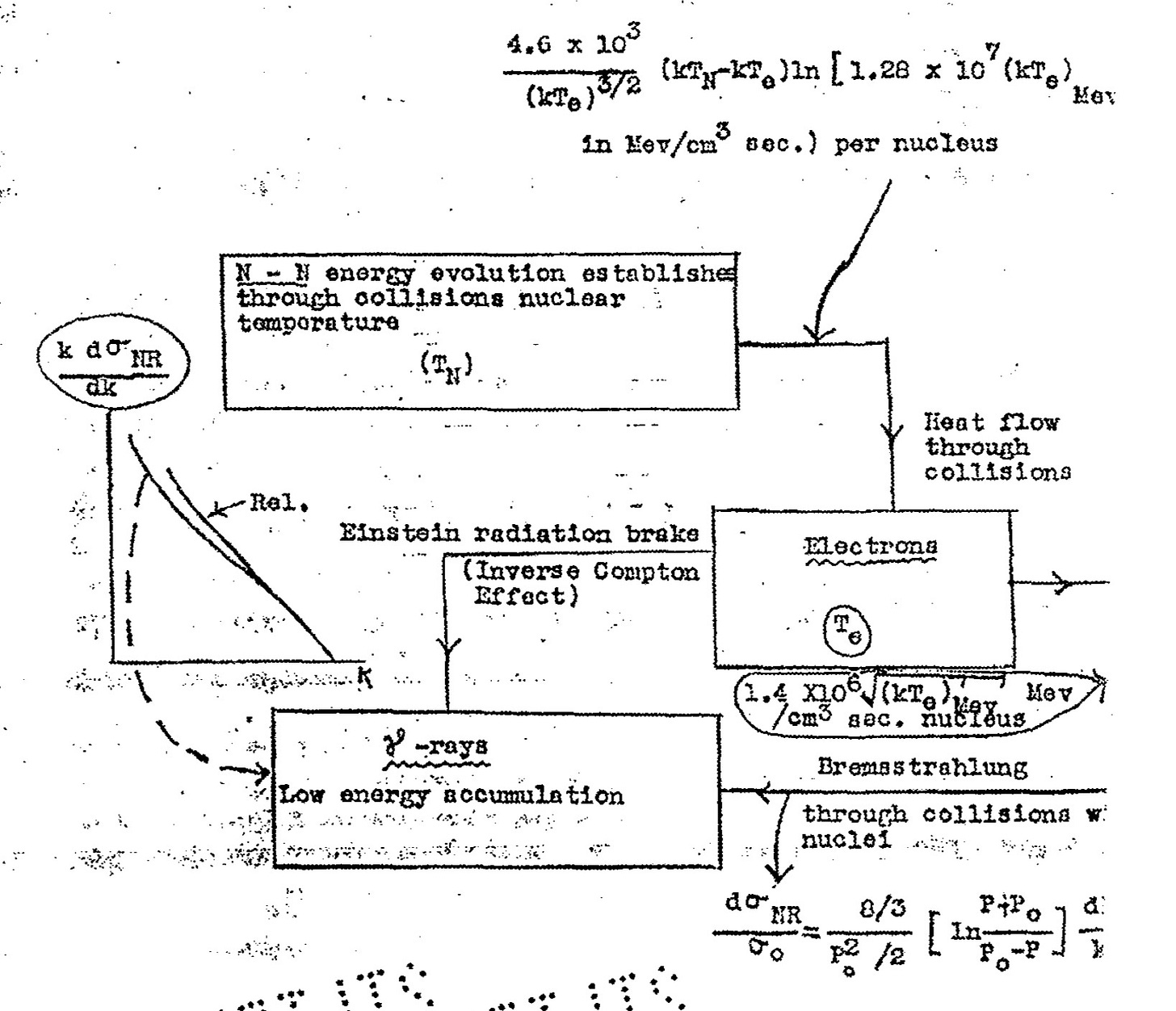

A chief “cooling” process that they knew about was at the time a closely held secret, a phenomenon known then as the inverse Compton effect, in which a high-energy electron can actually scatter off of photons and lose energy. I bring this up because, according to the Manhattan District History, the inverse Compton effect was only discovered in “the winter of 1943-44,” meaning it was probably not part of the argument against atmospheric ignition in 1942 or in Teller’s 1943 paper. Discussion of the inverse Compton effect is in fact redacted in the Los Alamos–Technical volume of the Manhattan District History, but other sources I have from the files of the Joint Committee on Atomic Energy indicate that it is discussed in section 5.62. The Borden–Walker H-bomb chronology (1953) paraphrases parts of the MDH, and its passage on this topic begins much like section 5.62 of the MDH, suggesting to me that it is based on it: “During the winter of 1943-1944 a theoretical obstacle was encountered: loss of heat through so-called ‘Compton cooling.’ This factor directed attention toward tritium (hydrogen 3) as a further means of igniting deuterium. Two members of the British wartime mission to Los Alamos performed the most extensive new calculations concerning tritium and deuterium.”

Specifically, they say that they believe that every kilogram of fissile material that fissions produces 5e26 MeV, and then say that this means that 1.5 million kilograms of material would need to fission to reach the dangerous temperature. The fissioning of 1.5 million kilograms of fissile material, at an average of 18 kt/kg, would release 27,000 megatons of energy.
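The 5e26 MeV figure is easy to reproduce from first principles. A rough sketch, assuming U-235 as the fissile material, about 200 MeV released per fission, and the conventional 4.184 × 10^12 J per kiloton of TNT (these constants are assumptions, not values taken from LA-602):

```python
AVOGADRO = 6.02214076e23         # atoms per mole
MOLAR_MASS_U235_G = 235.0        # grams per mole (approximate)
MEV_PER_FISSION = 200.0          # approximate energy per fission (assumption)
JOULES_PER_MEV = 1.602176634e-13
JOULES_PER_KILOTON = 4.184e12    # conventional TNT equivalent

# Number of U-235 atoms in one kilogram, and the energy if all of them fission.
atoms_per_kg = 1000.0 / MOLAR_MASS_U235_G * AVOGADRO
mev_per_kg = atoms_per_kg * MEV_PER_FISSION
kt_per_kg = mev_per_kg * JOULES_PER_MEV / JOULES_PER_KILOTON


def megatons(kg_fissioned: float, yield_kt_per_kg: float = 18.0) -> float:
    """Total yield in megatons for a mass fissioned at a given kt/kg rate."""
    return kg_fissioned * yield_kt_per_kg / 1000.0


print(f"{mev_per_kg:.2e} MeV/kg, about {kt_per_kg:.1f} kt/kg")
print(f"1.5 million kg at 18 kt/kg: {megatons(1.5e6):,.0f} Mt")
```

This gives roughly 5.1e26 MeV and about 19.6 kt per kilogram, slightly above the round 18 kt/kg used above; the difference comes down to how much of the energy per fission one counts. At 18 kt/kg, 1.5 million kilograms gives the 27,000 megatons.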

Exactly how many tons are fissioning in this scenario is pretty obscured in the scan that I have, but upon close examination I believe it is 3. Three thousand kilograms of fissioning, at 18 kt/kg, comes out to 54 megatons of energy release.

From the Manhattan District History, section 13.20: “The end sought was a bomb burning about a cubic meter of liquid deuterium. For such a bomb the energy-release will be about ten million tons of TNT.” By comparison, a sphere with a radius of 1 meter would have a volume of about 4.2 cubic meters, so at that rate it would release 42 megatons or so. There are some documents that make it clear that during this period they were imagining that the Super could be a weapon of 10 to 100 megatons, so this is in that ballpark.
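The 42-megaton figure is a straight volume scaling of the MDH number. A sketch, assuming (as a simplification) that yield scales linearly with the volume of deuterium burned, at the quote’s rate of about 10 megatons per cubic meter:

```python
import math

# Rate implied by the MDH quote: ~1 cubic meter of liquid deuterium -> ~10 Mt.
MEGATONS_PER_CUBIC_METER = 10.0


def sphere_volume(radius_m: float) -> float:
    """Volume of a sphere in cubic meters."""
    return 4.0 / 3.0 * math.pi * radius_m ** 3


volume = sphere_volume(1.0)
# Linear scaling with volume is an assumption, not a weapons-physics result.
yield_mt = volume * MEGATONS_PER_CUBIC_METER
print(f"{volume:.2f} m^3 -> about {yield_mt:.0f} Mt")
```

This prints a volume of about 4.19 cubic meters and a yield of about 42 megatons.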

Edward Teller with Judith Shoolery, Memoirs: A Twentieth-Century Journey in Science and Politics (Perseus, 2001), 210-211. There is a very funny anecdote in relation to the Trinity test here as well. Teller relates that the safety instructions they were given before Trinity included the warning to beware of rattlesnakes. While traveling to the site, Teller bumped into the physicist Robert Serber: “Knowing that he was also going to the test, I asked him how he planned to deal with the danger of rattlesnakes. He said, ‘I’ll take along a bottle of whiskey.’ Then I remembered that Bob was one of the few people with whom I had not discussed the question of what unknown phenomena might cause a nuclear explosion to propagate in the atmosphere. Because I took that assignment seriously, and even though officially the project had been completed, I proceeded to dish out the arguments and counter-arguments that we had considered. I ended by asking, ‘What would you do about those possibilities?’ Bob replied, ‘Take a second bottle of whiskey.’”

Edward Teller to Enrico Fermi (31 October 1945), Harrison-Bundy Files Relating to the Development of the Atomic Bomb, 1942-1946, microfilm publication M1108 (Washington, D.C.: National Archives and Records Administration, 1980), Roll 6, Target 5, Folder 76, “Interim Committee — Scientific Panel.”

The only document I know of that gets into the details of the Breit group’s work is Gregory Breit, “Notes for Talk on Atmospheric Ignition at the ORNL Conference in the Fall of 1952” (January 1953). A copy is reproduced, along with some contextual notes from a member of Breit’s group, in McAllister Hull, “Work on the Super and the Study of Atmospheric Ignition,” in Vernon Hughes, ed., The Gregory Breit Centennial Symposium, Yale University, USA, 29–30 October 1999 (World Scientific, 2001), 271-280.

Thomas A. Weaver and Lowell Wood, “Necessary conditions for the initiation and propagation of nuclear-detonation waves in plane atmospheres,” Physical Review A 20, no. 1 (1 July 1979), 316-328. DOI: https://doi.org/10.1103/PhysRevA.20.316.

See, e.g., Stuart Leslie, The Cold War and American Science: The Military-Industrial Complex at MIT and Stanford (Columbia University Press, 1993), which discusses these trends.

Whenever I hear about an experiment that’s trying to “recreate the conditions of the early universe,” I think of the possibility that universes last only as long as it takes for intelligent life to develop and figure out how to run experiments that attempt to recreate the conditions of the early universe.

Coincidentally, today on Medium, Avi Loeb (yes, I know his views are controversial) published a piece on whether a nuclear weapon used to deflect an extraterrestrial object headed for the Earth could instead ignite an explosion that could destroy the Earth, if the object contained an unusually high amount of deuterium, like 3I/ATLAS: https://avi-loeb.medium.com/can-an-atomic-explosion-ignite-a-chain-reaction-of-deuterium-in-3i-atlas-543958fb3b9d